Enterprise AI adoption has moved past the experimentation phase. Across industries, organizations are deploying AI in production — embedded in applications, surfaced through copilots, and woven into workflows that teams depend on every day. That’s real progress. But it’s also where a different kind of problem begins.

The more AI expands across the business, the harder it becomes to manage. Who has access to which models? How much is it actually costing? What happens when sensitive data ends up in a prompt where it doesn’t belong? These aren’t hypothetical concerns; they’re the operational realities that organizations run into as AI scales from a handful of pilots to something much larger.

And the numbers reflect this. According to Gartner’s AI services forecast, “In 2024, 60% of GenAI POCs were abandoned upon completion. In 2029, this will be reduced to 35%.” Not because the technology failed, but because the environment around it wasn’t ready. Weak governance, unpredictable costs, and a lack of visibility into how AI is actually being used — these are the factors quietly undermining initiatives that should be succeeding.

The good news is that these aren’t unsolvable problems. They’re infrastructure problems. And infrastructure is something we know how to fix.

The real challenge isn’t the AI — it’s the environment around it

Most organizations aren’t struggling to find AI tools worth using. The market for AI models, APIs, and services is deep and growing fast. The challenge is everything that surrounds those tools: how requests are routed, how policies are applied, how usage is tracked, how costs are controlled, and how security is enforced. All of it has to work consistently across every team, every application that touches AI, and every model provider.

Without a consistent control layer, what tends to happen is a patchwork of protections and configurations. Different teams connect to different models in different ways. Sensitive data flows through prompts that no one is consistently inspecting. Costs climb in unpredictable ways as usage spikes. Security and compliance teams ask questions that no one has good answers to. And as the footprint grows, so does the exposure.

This is the governance gap — and it’s the single biggest threat to the long-term success of enterprise AI.

AI governance starts at the infrastructure layer

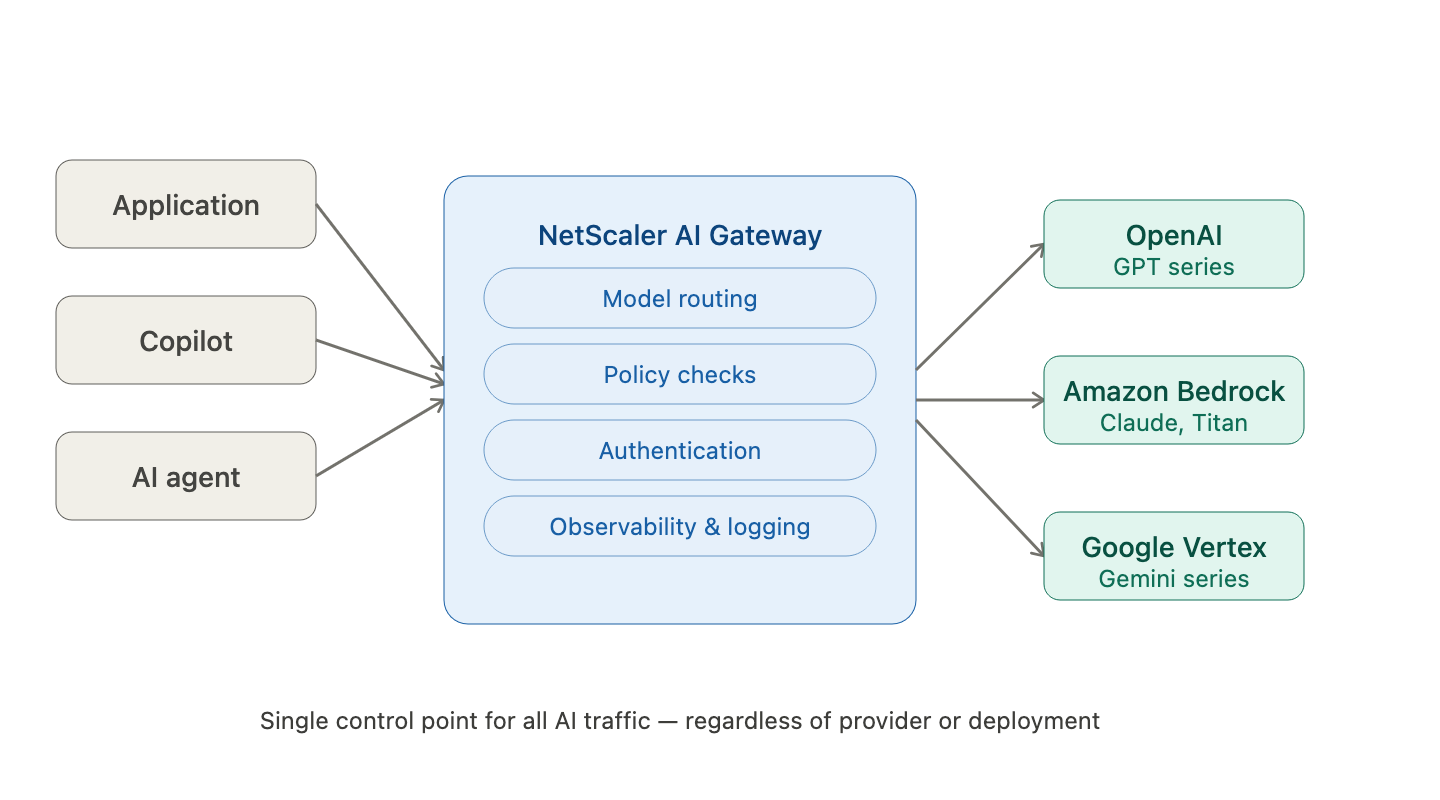

The organizations that are scaling AI successfully share a common approach: they’ve established a centralized control layer for how AI traffic flows across their environment. Instead of managing each AI connection individually, they route all AI interactions through a single point of governance — one that can enforce policy, manage cost, protect sensitive data, and provide visibility, regardless of which model is being used or where it’s deployed.

That’s exactly the problem the Citrix NetScaler AI Gateway is built to solve.

NetScaler AI Gateway gives enterprises a single control point for monitoring, managing, and governing AI usage across the organization. It applies the same proven infrastructure principles that NetScaler has used to manage application traffic for decades — now extended to the AI layer. The result is an environment where AI can scale without the risk of sprawl: governed, observable, and cost-controlled from the start.

What that looks like in practice

From an operations standpoint, NetScaler AI Gateway simplifies how AI is integrated into applications and workflows. Consider something as common as switching between model providers or models — moving from one vendor’s offering to another, or swapping in a newer model as the landscape evolves. Without a centralized control layer, that change has to be managed across every application and team that touches AI. With AI Gateway, the switching happens behind a single, unified endpoint. Applications and end users stay connected without disruption, and your teams aren’t buried in integration rework every time the AI landscape shifts.
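To make the single-endpoint idea concrete, here is a minimal Python sketch of the general pattern, not NetScaler AI Gateway's actual configuration or API. It assumes applications address a stable alias while a routing table decides which backend serves it. The names `Backend`, `ModelRouter`, `chat-default`, and the vendor and model strings are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    provider: str  # hypothetical provider name
    model: str     # hypothetical model identifier


class ModelRouter:
    """Maps the stable aliases applications use to concrete backends."""

    def __init__(self):
        self._routes = {}

    def set_route(self, alias, backend):
        # Changing a route is the only step needed to switch providers.
        self._routes[alias] = backend

    def resolve(self, alias):
        try:
            return self._routes[alias]
        except KeyError:
            raise LookupError(f"no backend configured for alias {alias!r}") from None


router = ModelRouter()
router.set_route("chat-default", Backend("vendor-a", "model-v1"))
before = router.resolve("chat-default")

# Swap the provider behind the alias; callers keep using "chat-default".
router.set_route("chat-default", Backend("vendor-b", "model-v2"))
after = router.resolve("chat-default")
```

Because every application resolves the same alias, the provider change is made once in the routing table rather than in each integration.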

From a performance and efficiency standpoint, NetScaler AI Gateway optimizes how AI requests are handled. It routes requests based on performance, distributes workloads evenly, and fails over automatically when a model or service becomes unavailable. The business outcome is straightforward: more consistent AI performance at scale, without requiring manual tuning or over-provisioning.
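The routing and failover behavior described above can be illustrated with a short Python sketch. It is a simplified illustration under stated assumptions, not the product's algorithm: backends are plain callables, latency figures are supplied by the caller, and a failed backend raises `ConnectionError`. The function `call_with_failover` and the backend names are hypothetical.

```python
def call_with_failover(backends, latencies_ms, prompt):
    """Try backends from fastest to slowest; return (name, response)
    from the first one that succeeds."""
    failed = []
    # Backends with no recorded latency are tried last.
    ordered = sorted(backends, key=lambda name: latencies_ms.get(name, float("inf")))
    for name in ordered:
        try:
            return name, backends[name](prompt)
        except ConnectionError:
            failed.append(name)  # remember the failure and try the next one
    raise RuntimeError(f"all backends failed: {failed}")


def flaky(prompt):
    # Stand-in for a model endpoint that is currently down.
    raise ConnectionError("primary unavailable")


def healthy(prompt):
    # Stand-in for a model endpoint that responds normally.
    return f"echo: {prompt}"


served_by, reply = call_with_failover(
    {"primary": flaky, "secondary": healthy},
    {"primary": 120.0, "secondary": 300.0},
    "hello",
)
```

In this run the faster `primary` backend is tried first, fails, and the request is served by `secondary` without the caller having to do anything.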

From a security and governance standpoint, NetScaler AI Gateway enforces access controls and inspects traffic for sensitive data before it reaches a model. Policies apply consistently, regardless of which application is making the request or which team owns it. This matters across the board — from healthcare and financial services workflows where regulated data is at stake, to something as ubiquitous as developer tooling. As coding assistants become a standard part of how engineering teams work, those tools need the same governance treatment as any other AI service. AI Gateway provides a secure, controlled access point for coding assistants, ensuring developers get the productivity benefits without the organization taking on unmanaged risk.
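As a rough illustration of inspecting a prompt before it reaches a model, the Python sketch below redacts two kinds of sensitive values with regular expressions. It is an assumption-laden example rather than a description of how AI Gateway inspects traffic: the `PATTERNS` table, the `redact` function, and the two patterns (email addresses and US-style Social Security numbers) are hypothetical, and a production system would need far broader detection.

```python
import re

# Hypothetical detection rules; real deployments need many more patterns.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt):
    """Replace each match with a placeholder and report which rules fired."""
    findings = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            findings.append(label)
    return prompt, findings


clean, found = redact("Contact jane@example.com, SSN 123-45-6789.")
# clean is "Contact [EMAIL], SSN [SSN]." and found is ["EMAIL", "SSN"]
```

Running the check at one shared control point means the same rules apply to a coding assistant, an internal chatbot, or any other application, whichever team owns it.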

And from a cost standpoint, NetScaler AI Gateway gives organizations the visibility and controls they need to manage one of the more counterintuitive aspects of AI at scale: the fact that costs are driven by consumption patterns that are often invisible until it’s too late. By tracking token usage and enforcing limits, organizations can prevent runaway spend and allocate AI resources fairly across teams — without degrading performance for anyone.
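Token tracking and limits can be sketched in a few lines of Python. This is a minimal illustration that assumes a fixed token allowance per team and a token count known for each request. The `TokenBudget` class, the team names, and the limits are hypothetical and do not reflect the product's actual quota model.

```python
class TokenBudget:
    """Tracks token consumption per team against a fixed limit."""

    def __init__(self, limits):
        self._limits = dict(limits)
        self._used = {team: 0 for team in self._limits}

    def try_consume(self, team, tokens):
        """Record the usage and return True if it fits the team's remaining
        budget; otherwise return False and record nothing."""
        if team not in self._limits:
            return False  # unknown teams get no allowance
        if self._used[team] + tokens > self._limits[team]:
            return False
        self._used[team] += tokens
        return True

    def remaining(self, team):
        return self._limits[team] - self._used[team]


budget = TokenBudget({"search": 1000, "support": 500})
first = budget.try_consume("support", 400)   # fits: 400 of 500 used
second = budget.try_consume("support", 200)  # rejected: would reach 600
left = budget.remaining("support")
```

Because each team draws on its own allowance, one team hitting its limit leaves the others unaffected.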

AI should be a managed enterprise service — not a collection of unmanaged integrations

The organizations that will get the most out of AI over the long term are the ones treating it like any other enterprise-grade capability: with the appropriate infrastructure, governance, and controls in place from the start.

That’s not about slowing down AI adoption. It’s about making sure the investments you’re making today hold up as usage grows. Building AI on a governed, observable, controlled infrastructure foundation isn’t a constraint — it’s what makes scale possible.

NetScaler AI Gateway is built for exactly that moment: when AI is no longer an experiment, and your infrastructure needs to match the ambition.

Ready to see it in action? Watch our demo videos to see how AI Gateway governs AI traffic and controls cost in real enterprise environments.