So good evening/morning/afternoon to all of you!

Here I am with no complaints as I have time to provide a final update to this blog I originally posted in early May. The reason for the staggered updates to this is simple:

– Like everyone I know, I have been busy

– Wonderful questions and conversations spawned from this entry

– I needed the time to organize my thoughts for proper answers

– Making the Comments Section an Appendix is just poor taste

Now, without further delay (as I have another blog to write today), I present “Ditching Cirrus: Part III”

The Exposition

For many reasons – not exclusive to XenServer – the Cirrus video driver has been a staple for many-a-project wherein a basic, somewhat agnostic video driver is needed. When you create a VM in XenServer (you guessed it) the Cirrus video driver is used and it does the job.

I had been working on a project with my mentor related to an eccentric OS I have used for quite a long time (no hints here), but I needed a way to get more real estate to test HID device pointing and general video experiences. This led me to find that at some point in our upstream code there were platform (virtual machine) options that allowed an administrator to ditch the simple Cirrus driver for a more standard VGA driver.

… And so, I did it. It is awesome. It is not GPU pass-through. It is not a hack. It is a valuable way to achieve 32bpp color in Windows, obtain higher resolutions, etc.

Why?

Did I say it was awesome? Let me show the resolution options for Windows 7 Enterprise (64-bit) with Cirrus and then with VGA (same VM – different screenshots after swapping out Cirrus for VGA):

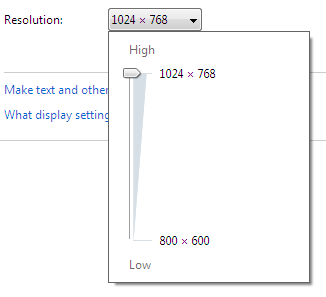

First up… Cirrus!

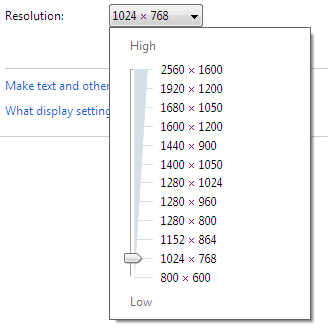

Now, Ladies and Gentlemen – make way for VGA!

Okay, Mr. @Xenfomation – How Do I Ditch Cirrus for VGA?

After you create your VM (Windows, Linux, etc.), just follow the instructions below. Later in this final revision I’ll explain more about video RAM, performance, and the other questions I have been asked.

1. Halt your VM

2. From the command line, find the UUID of your VM:

xe vm-list name-label="Name of your VM"

3. Taking the UUID value, run the following two commands:

xe vm-param-set uuid=<UUID of your VM> platform:vga=std

xe vm-param-set uuid=<UUID of your VM> platform:videoram=4

Restart your VM and presto. You should now be able to achieve 32bpp at a higher resolution.
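For those who would rather script it, here is a minimal sketch of the steps above as one shell function. The name switch_to_vga is my own invention (it is not part of the xe CLI), and it assumes you already have the UUID in hand and that the VM is either running or halted. Test it on a VM you do not care about first.

```shell
#!/bin/sh
# Sketch only: switch one VM from Cirrus to the standard VGA adapter.
# switch_to_vga is a hypothetical helper name, not an xe command.
switch_to_vga() {
    vm_uuid="$1"
    vram_mib="${2:-4}"   # video RAM in MiB; 4 mirrors the steps above

    if [ -z "$vm_uuid" ]; then
        echo "usage: switch_to_vga <vm-uuid> [videoram-MiB]" >&2
        return 1
    fi

    # Step 1: halt the VM, but only if it is not halted already.
    state="$(xe vm-param-get uuid="$vm_uuid" param-name=power-state)" || return 1
    if [ "$state" != "halted" ]; then
        xe vm-shutdown uuid="$vm_uuid" || return 1
    fi

    # Step 3: swap the adapter and set the video RAM, then boot again.
    xe vm-param-set uuid="$vm_uuid" platform:vga=std &&
    xe vm-param-set uuid="$vm_uuid" platform:videoram="$vram_mib" &&
    xe vm-start uuid="$vm_uuid"
}
```

To fetch just the UUID for step 2, `xe vm-list name-label="Name of your VM" params=uuid --minimal` prints it on one line; after that, `switch_to_vga <UUID of your VM>` runs the rest.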

Thanks to Mr. Tobias, this has to be stated:

If – for one reason or another – you want to remove these settings and go back to the Cirrus driver, run the following commands:

xe vm-param-remove uuid=<UUID of your VM> param-name=platform param-key=vga

xe vm-param-remove uuid=<UUID of your VM> param-name=platform param-key=videoram

Reboot your VM and presto — back to the stock Cirrus drivers for video (with my sympathies).
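The same two removal commands can be wrapped up the same way. Again, revert_to_cirrus is a hypothetical helper name of mine, and I have not verified here what xe reports when a key was never set, so this sketch simply tries both keys and tells you if either removal failed.

```shell
#!/bin/sh
# Sketch only: drop the vga and videoram platform keys so the VM
# falls back to the stock Cirrus adapter on its next boot.
# revert_to_cirrus is a hypothetical helper name, not an xe command.
revert_to_cirrus() {
    vm_uuid="$1"
    if [ -z "$vm_uuid" ]; then
        echo "usage: revert_to_cirrus <vm-uuid>" >&2
        return 1
    fi

    status=0
    for key in vga videoram; do
        # Keep going if one key is missing, but remember the failure.
        xe vm-param-remove uuid="$vm_uuid" param-name=platform param-key="$key" || status=1
    done
    return "$status"
}
```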

The “platform:” value, The Cirrus/VGA Driver, The Video RAM Setting, and The Performance

Yep. By default and design, you will not see this in the “platform:” value for xe vm-param-list, set, etc. I do not know why, but this is yet another reason why I am sharing this with fellow Citrites, Partners, Clients, and Citrix Fans.
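If you want to check what a VM currently has set, a small sketch like the one below asks for each key individually. show_video_settings is a made-up helper name, and the sketch assumes that xe vm-param-get exits non-zero when a key is absent from the platform map, which is what lets it print a placeholder for a stock VM.

```shell
#!/bin/sh
# Sketch only: print the vga and videoram platform keys for one VM.
# show_video_settings is a hypothetical helper name, not an xe command.
show_video_settings() {
    for key in vga videoram; do
        # Assumption: a missing key makes xe fail, so fall back to a placeholder.
        value="$(xe vm-param-get uuid="$1" param-name=platform param-key="$key" 2>/dev/null)" ||
            value="(not set, default)"
        echo "$key: $value"
    done
}
```

Run `show_video_settings <UUID of your VM>` before and after making the change to see the difference.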

Again – when a VM is created the default video driver is “Cirrus” and it uses a total of 4 MiB (4 mebibytes) of memory. This is fine and all for most individuals, but some want to achieve 32bpp with better resolution. The VGA driver is not something “superior”, but it offers a bit more (as one can see for themselves): higher resolutions and more color depth for one (or all) of your VMs, so that each session has more room to work with.

As for the video ram setting, 16 MiB is the max amount that can be set for the VGA driver. Why? To be quirky and blunt:

– It is 4 times the max of what Cirrus uses

– You don’t need more for 2D graphics

– Because your Ducati Monster does 165 miles per hour doesn’t mean you should always drive it that fast
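To put some numbers behind "you don't need more for 2D graphics", here is a back-of-the-envelope sketch. It assumes the only thing the video RAM has to hold is a single uncompressed frame (width x height x bytes per pixel), which is a simplification on my part and not a statement about how the emulated adapter actually manages its memory. Both function names are hypothetical.

```shell
#!/bin/sh
# Sketch only: how many bytes does one frame need, and does it fit?

# framebuffer_bytes WIDTH HEIGHT BPP -> bytes for one uncompressed frame
framebuffer_bytes() {
    echo $(( $1 * $2 * $3 / 8 ))
}

# fits_in_videoram WIDTH HEIGHT BPP VIDEORAM_MIB -> exit 0 if one frame fits
fits_in_videoram() {
    need="$(framebuffer_bytes "$1" "$2" "$3")"
    have=$(( $4 * 1024 * 1024 ))
    [ "$need" -le "$have" ]
}
```

By this arithmetic, 1024 x 768 at 32bpp needs 3,145,728 bytes (exactly 3 MiB), while 1920 x 1200 at 32bpp needs 9,216,000 bytes, which is too big for 4 MiB but sits comfortably inside 16 MiB.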

….. Um, where is this “video memory” being carved out?

Cirrus or VGA, the video RAM is carved out of physical RAM, in addition to the RAM that was allocated for the VM. More specifically, if your VM uses 2GiB of RAM it is actually using: 2GiB (for the VM) + 4MiB (for video – seen by the OS). I KNOW – HEFTY, RIGHT?

….. Performance?

Disclaimer Alert: These numbers are in no way official, scientific absolutes, nor reason for alarm. Sorry, but I have to make it clear these are my testing experiences on my own time, with my own hardware, and on my own operating systems. Besides – every environment is different so the real take-away from the disclaimer is… test it for yourself and see how it works!

For testing, the principle was simple:

– Find 2D-based graphics performance programmes

– Use various operating systems with Cirrus and resolution at 1024 x 768

– Run the 2D volley of tests

– Write down Product X, Y, or Z’s magic number that represents good or bad performance

– Apply the changes to the VM to use VGA (keeping the resolution at 1024 x 768 for some kind of balance)

– Run the 2D volley of tests

– Write down Product X, Y or Z’s magic number that represents good or bad performance

I am happy to say that, in my home environment and on one office machine, there was a minor but noticeable difference between Cirrus and VGA. Cirrus usually came in 10-40 points below VGA at 1024 x 768, depending on the product and whatever scoring system it used.

So, thanks for your time, questions, and here is to a bigger picture!

And this is from my Virtual Desktop to you!

–jkbs

@Xenfomation